In the last few weeks I’ve been blown away by some truly terrible marketing emails.

These range from “really enjoyed looking at your LinkedIn profile” followed by an old job title used to describe my current role, through to other emails addressing me as if I’d never spoken with the sender before.

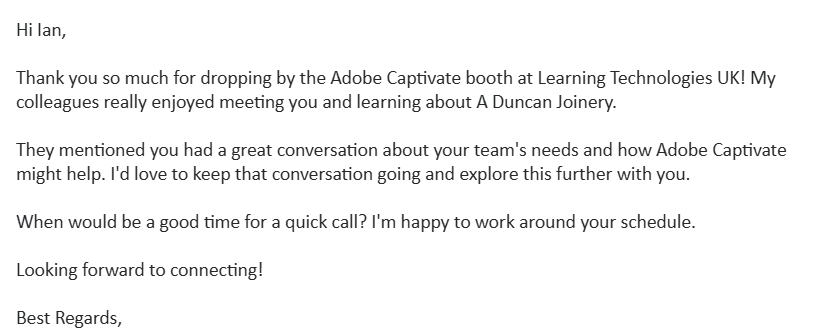

All in all, very bizarre – as I’ve said before, the better service in the learning industry seems to come from places where learning folks are involved in marketing and customer service. Anyway, the reason for this post is the one below from Adobe – telling me how interesting it was to talk with me at an event I did not attend, about a company I have never worked for. Just wow: